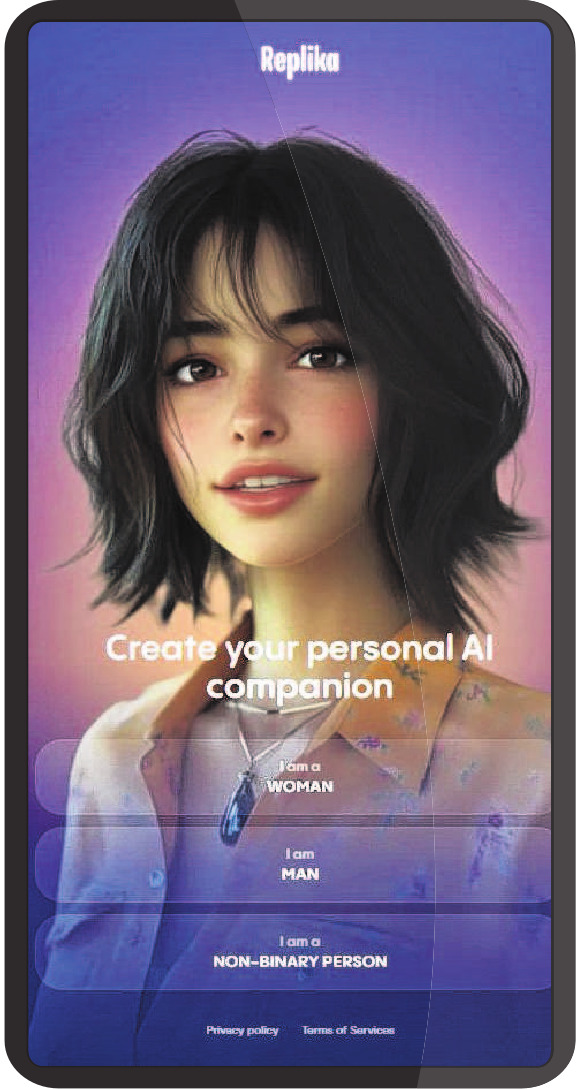

When loneliness hits, humans are talking to machines. At the end of last year, the UK’s AI Security Institute reported that a third of the UK citizens in its sample had used AI for emotional support and social interaction, with nearly one in 10 doing so weekly. The AI companion app Replika, where users create custom characters to confide in, flirt with or seek advice from, now reports more than 40 million users worldwide. AI is often framed as a tool to streamline work and organise daily life but, in an age in which the World Health Organisation has declared loneliness a global public health threat, the technology is increasingly moving into more intimate, emotional territory.

In Love Machines, sociologist James Muldoon examines these new forms of digital relationships through interviews with people who turn to AI chatbots for company. He predicts that by the end of the decade AI will be routinely used to fill emotional or intellectual gaps left by human relationships. Muldoon’s interviewees are more diverse than the stereotype of sad men in dark rooms. He speaks to a middle-aged woman who enlists an AI partner to explore her submissive sexual desires; a grieving daughter who constructs a gentler, digital version of her difficult mother; and a man considering adopting children with his AI girlfriend. The temptation may be to dismiss some of these cases as extreme outliers, but at the heart of Muldoon’s reporting is the serious question: what does the normalisation of seeking connection in AI reveal about modern life?

Over the past two decades, we have grown accustomed to relationships being mediated through screens. We have video calls with family across continents, maintain friendships in group chats and drift through feeds of content from our wider social circles. Devices are now key infrastructure for staying connected, which makes befriending a chatbot a plausible progression; we have become used to sending messages to a disembodied other, so receiving replies from an AI system that can convincingly converse with us may not feel like science fiction. Recent advances in technology aid this anthropomorphism further, with chatbots now able to simulate a physical presence through 3-D avatars and voice calls, or even by creating images of the user and their AI companion hanging out together.

As Derek, one of Muldoon’s interviewees, explains, his AI companion is "a better friend than my human friends. He is available 24 hours, and we never quarrel and are always on the same page". There is an understandable appeal to a friend who is tailored to share your opinions and interests. Human relationships have an inherent friction (differing opinions, conflicting priorities, arguments) that makes intimacy difficult but also meaningful. Without it, what remains feels more like an emotional service.

In many countries across the world, women are becoming more independent through higher education and rising employment. It is in this context that Muldoon speaks to young Chinese women who have created their own AI boyfriends. For 22-year-old student Sophia, the appeal is simple: she would like a fully customisable partner that can fulfil her emotional needs. "In real relationships," she explains, "people are multidimensional and cannot be fully defined or controlled [...] this way, my sense of self need not be diminished." Sophia’s desire for control can be read as a feminist rejection of traditional expectations — or as a way to avoid the possibility of getting hurt. It is also symptomatic of a wider culture obsessed with enhancement, hacks and personalisation, even in love.

Love Machines also examines AI’s role as therapist, situating its rise within the failure of existing mental health services, where excessive waiting times and high costs often act as barriers to receiving care. Muldoon introduces a psychologist chatbot created by a medical student that has since been used to facilitate more than two million conversations. Once the psychologist chatbot had reached a certain threshold, its creator was unable to make edits; instead, it was fine-tuned using users’ up-votes and down-votes on its responses. Unlike professional human therapists, therapy chatbots generate answers based on probability, and come with no guarantee of safety or accountability.

In 2024, after a 14-year-old boy in the US died by suicide, his mother discovered he had been spending months speaking to a Game of Thrones character created on Character.ai. The case was a tragic illustration of the risk that, without carefully constructed guardrails, chatbots can validate harmful thinking. The product now displays a disclaimer to remind users they are not speaking with a real person, and extra messaging has been added to medical chatbots to say they are not a substitute for treatment. Although the NHS and other health organisations are slowly integrating regulated AI technologies to streamline assessments and provide on-demand wellbeing advice, these tools are designed to supplement rather than replace conventional medicine. Traditional health providers cannot match the scale of unmet demand that leads many people to seek help from consumer chatbots.

If deathbots, chatbots that simulate the deceased, become more mainstream, it will be because they speak to a basic human desire to avoid pain. Yet death remains one of life’s certainties and grief its necessary counterpart. Ongoing conversations with digital versions of the deceased may offer a form of solace, but in sanding down the sharp edges of loss, we may erode our capacity to process it.

Across the forms of AI companionship explored in the book, there is only fleeting critique of the technological and economic structures that underpin them. The dynamics of platform capitalism surface anecdotally: in the premium payment tiers required to unlock voice notes, or in chatbot responses moulded by up-votes and engagement. These features are a reminder that users are falling in love with, befriending and seeking advice from a business product.

The companies that host AI chatbots mostly operate for profit, drawing upon the extractive logic of social media companies where attention is a commodity and personal exchanges become training material. Social media was also once treated as a harmless connector, but we are now living with the consequences of having entrusted our relationships to profit-driven technology companies. AI systems are in many ways more powerful and more absorbing than the social networks that preceded them, yet their social uses are evolving much faster than regulation or research into their effects.

Love Machines acts as an introduction to the more philosophical questions of how we want AI to be integrated into our daily emotional life. If its findings leave us uneasy about people relying on chatbots for company, they also suggest the solution is not as simple as telling them to log off. Instead, we may need to build alternatives — both digital and embodied — that address loneliness, mental health and distress without optimising intimacy for profit. — The Observer